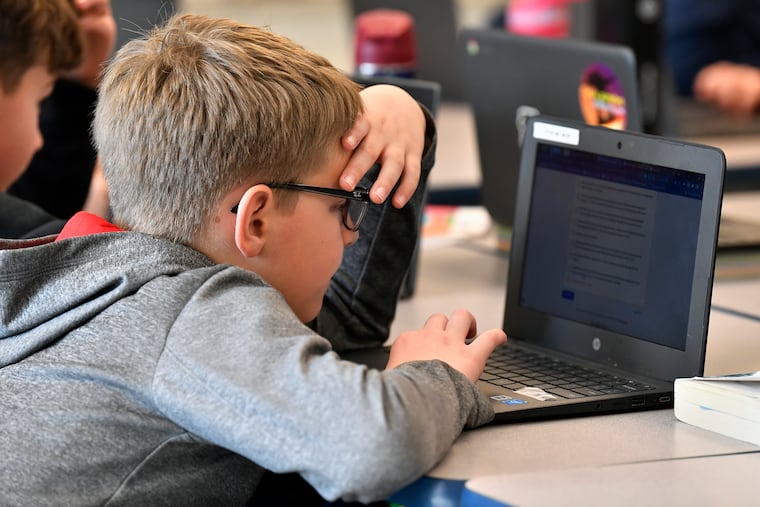

AI is reshaping childhood. Here are the risks and benefits parents should know about, according to CHOP researchers.

A recent CHOP review article summarized what's currently known about the risks and benefits of children using AI.

Artificial intelligence presents a mixed bag of risks and benefits for children that vary by age, according to Children’s Hospital of Philadelphia researchers who reviewed dozens of academic studies on the emerging technology.

For young children, an AI chatbot could help with language development, yet it could also distort their perceptions of social interactions.

For adolescents, the technology could help with career exploration, but its record of inappropriate responses to mental health matters raises concerns.

The researchers summarized the current evidence on generative AI — tools that imitate human intelligence to produce content in the form of text, audio, images, or videos — in a review article published Wednesday in the medical journal Pediatrics. They reviewed 55 published works largely released in the last five years, including nearly three dozen peer-reviewed studies and a mix of news articles, blog posts, and pending legislation.

They separated the potential effects across early childhood (ages 0 to 5), middle childhood (6 to 11), and adolescence (12 and older) to lay out the considerations for families.

Guidance for parents on how AI might reshape childhood remains limited, despite the technology’s rapid spread into children’s learning and play, said Robert Grundmeier, a primary care pediatrician at the Children’s Hospital of Philadelphia and the review’s lead author.

Nearly two-thirds of teens use chatbots such as ChatGPT or Gemini, with 28% doing so daily, according to a Pew Research Center survey last year. They are using the tools for everything from searching for information to getting help with homework and chatting with a digital companion.

“Our children are getting exposed to AI at incredibly young ages, well before they have a smartphone,” Grundmeier said.

The article was what’s called a “state-of-the-art review,” meaning it covers a topic that is rapidly changing, and for which there’s not yet a lot of rigorous research, he said.

He hopes other researchers will dig deeper into the area “so that we can actually start to, in the future, make some concrete recommendations about best practices.”

The Inquirer spoke with Grundmeier about what parents should know about children’s use of generative AI in a conversation lightly edited for clarity and length.

What are the takeaways of your review?

There’s a lot of opportunity, clearly in the educational domain, in helping to really creatively tailor and customize educational materials.

One of the biggest concerns that came up had to do with the reliance on artificial intelligence as a companionship tool. You can interact with it the way you might with a friend. And there are some nice things about that, in terms of being able to explore ideas in a non-judgmental way. But I think there’s a tremendous concern, especially from a child development perspective, that children could learn incorrect mental models of human interaction.

How might interacting with AI differ?

AI tools are typically designed to promote engagement. While a human might challenge your ideas and push back — friends do it all the time — an AI tool is typically a little less likely to push back and challenge you in a way that might make you unhappy with the interaction.

There’s more nuance in the human interaction.

What are the potential risks and benefits of AI in early childhood?

There’s a lot of opportunity for creativity, storytelling, and supporting language development that could be a really nice benefit of AI in preschool-aged children. The concern regarding incorrect mental models and not correctly understanding what a human interaction is meant to be like is really most notable, however, in this age category.

It’s really essential that a parent always remains involved in any AI interactions, looking at the output from AI alongside their child, and preferably prescreening what’s being generated to make sure their young child is not accidentally exposed to any harmful content.

What about for school-age children?

There’s a lot more opportunity to personalize education to people’s different learning styles.

But similarly, there are definitely school rules that have to be followed on the appropriate use of AI. To the extent parents can start to promote an idea of AI literacy and make sure that their child is not handing over their learning to the AI, then I think there’s a lot of good opportunity there.

We want to promote skill development, not cause people to have their skills atrophy because they’re relying on the AI to do their homework.

What are the considerations for adolescents?

There are social interaction concerns. We reference some of the news related to problems with teenagers using AI tools as a companion or a friend. In particular, there was some research that showed that AI tools may respond very inappropriately to questions about mental health topics, including suicide. There really needs to be a lot of guardrail development on the part of the AI vendors to make sure that teenagers do not have harmful interactions with AI.

What are potential benefits of adolescents using AI?

AI is here to stay as part of our futures and our professional careers. To the extent that AI literacy can be supported in the adolescent age group, so that they can enter the workforce as professionals who know how to use AI appropriately, I think that’s a worthwhile educational effort.

It can also be a valuable tool for career exploration and college choice. There’s a lot of information about different colleges and career paths, and AI tools are good at summarizing, synthesizing, and interpreting something in light of what you might say are your priorities.

Is there anything that you feel is still uncertain or needs to be clarified through future research?

The manner of interacting with AI keeps changing. For example, various household ambient AI tools (devices that passively listen to us) have been in existence for a while, but now the types of interaction have become much more complicated. We need to understand which ways of using these tools in the household are safe, effective, and supportive of child development.

Another category of research that is really important is developing guardrails, evaluating them, and making sure that they’re adapted appropriately for different age stages.

As a pediatrician, what have you been hearing about AI from parents?

I was chatting with the family of an elementary school-aged child about school performance, and the mom indicated some difficulties supporting his reading comprehension. They had discovered, with support from his school, that they could use AI tools to create reading comprehension paragraphs to practice with at home, helping him learn how to really focus on his reading. I thought that was actually a fantastic example.

What I’m struck by is really the creativity that families are approaching this with. There’s a lot of good opportunity there, as long as we pay attention to the risks and make sure guardrails are in place appropriately.