The ‘secretary of war’ and a tech CEO shouldn’t be the only ones debating ethical use of AI | Editorial

Congress should issue legally binding guidelines for the use of artificial intelligence in war — including restrictions on mass surveillance and autonomous weapons.

Since the dawn of modern computing, there has been speculation that technology would someday outpace humanity’s ability to control it. Yet, for all those concerns, technology kept advancing, and such a scenario often felt far away. At least until artificial intelligence came onto the scene.

Now, the Trump administration is using AI to assist in the war in Iran, and Defense Secretary Pete Hegseth has demanded that tech companies agree to collaborate with the military without guardrails. Swarms of autonomous killer drones are within reach, and President Donald Trump wants to control them without restriction.

What could possibly go wrong?

Last week, Hegseth unilaterally terminated the Pentagon’s partnership with Anthropic, the creator of Claude, widely considered the leading AI system available. The government asked for unfettered use of Claude, including for mass domestic surveillance and for the creation of weapons that kill without human input. CEO Dario Amodei refused.

The Trump-anointed secretary of war responded by designating Anthropic as a supply-chain risk to national security, a move that would ban all defense contractors from using Anthropic products. In effect, Hegseth is trying to force an American company to do work it did not agree to take on, work that could be used to violate the law and Americans’ constitutional rights.

The move comes not long after public acknowledgment that the military used Claude to help plan the capture of Venezuelan President Nicolás Maduro. The AI technology is also being used in the bombing campaign against Iran. One of the stated uses is “target identification.” During U.S. and Israeli strikes against Iran, an elementary school that neighbors an Iranian naval base was struck, resulting in the deaths of dozens of children. While the U.S. has yet to confirm responsibility, the incident is a clear sign to exercise caution, not to forge ahead recklessly.

Anthropic has benefited from the clash with the administration, as many people who oppose the president’s policies look to reward anyone who stands up against Trump. Claude overtook competitor ChatGPT for the first time in user downloads, hitting No. 1 on Apple’s App Store. But Anthropic is no clear-cut hero.

In a statement, the company rightly underlined that “mass domestic surveillance of Americans constitutes a violation of fundamental rights.” But it also said it was willing to help the Pentagon in the future. It just doesn’t believe that today’s AI systems “are reliable enough to be used in fully autonomous weapons.”

Meanwhile, the United States is hardly the only country likely to develop the capacity to deploy autonomous lethal weapons. China’s leading AI firm, DeepSeek, is working with the People’s Liberation Army to create its own autonomous battle systems. Under Hegseth’s punitive order, DeepSeek is now treated more favorably than Anthropic.

Like nuclear weapons, artificial intelligence has become the subject of an international arms race. Both have the power to cause unprecedented death and destruction. Nations have reason to fear being left behind, and it may be too late to close Pandora’s box.

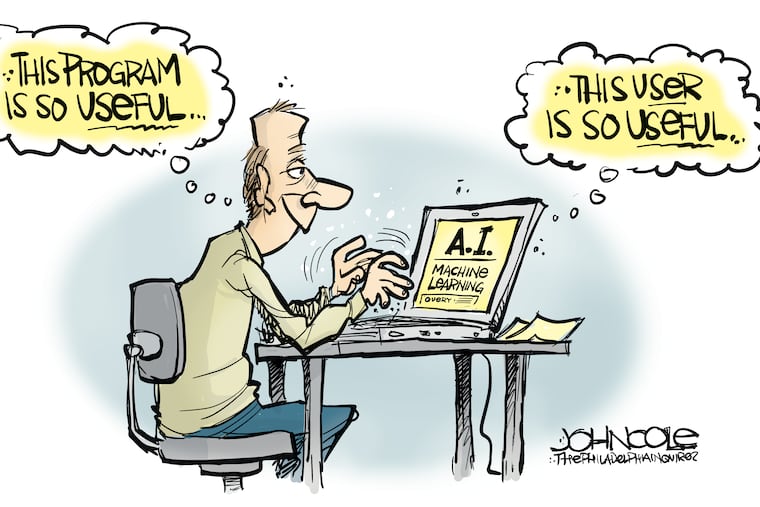

The debate is one of many around the growth of artificial intelligence. Americans are as concerned about the potential for job losses through creative destruction, the proliferation of data centers, and the potential for a less human future as they are excited about the possibility of widespread self-driving cars. Newsrooms, college campuses, and businesses are all grappling with how to use AI technology ethically and productively.

It is time for more lawmakers to join this complicated conversation.

Instead of leaving matters to a negotiation between Hegseth and a tech CEO, Congress should issue legally binding guidelines for the use of artificial intelligence in war, including restrictions on mass surveillance and autonomous weapons. Congress could also debate the use of AI in other areas, like healthcare and education.

It is distinctly possible that the systems will improve outcomes for some people. AI has detected cancers that human doctors missed, research has shown that Waymo is significantly safer than human drivers, and AI has enabled newsrooms to restore coverage to smaller cities and towns that have struggled to sustain their own outlets.

At the same time, even the best AI assistants can still hallucinate facts and make mistakes. That’s embarrassing when it happens on a term paper or a court filing; it can be deadly on the battlefield.

Above all, human control over lethal weapons is essential. Echoing the nuclear proliferation treaties that benefited humanity in the 20th century, the United States should lead the way in assembling a broad coalition of powerful nations that agree to ban fully autonomous weapons.

In the meantime, Trump and Hegseth’s decision to spurn Anthropic points the way toward a 21st-century disaster.
