Getting robots of war to act more naturally

Researchers look to swarms and colonies.

The next generation of military robots won't just be humanoids like the Terminator.

Many of the robots of the future will likely work in concert, like a swarm of ants. Others may creep like spiders or hover like hummingbirds, if the work at the University of Pennsylvania is any indication.

Most of the more than 5,000 robotic devices now in Iraq are remote-controlled, sniffing out explosives or performing other jobs under constant human direction.

But in a shift with huge implications for warfare and diplomacy, the military wants robots that act of their own volition. It's a prospect that promises to save lives but, some experts say, could also make wars more palatable and easier to start.

"The key [to the new robots] is increasing autonomy," said Joseph Mait, senior technical researcher at the U.S. Army Research Lab in Adelphi, Md. "Now we have several soldiers per robot, but in the future we'd like to have one soldier with many robots."

In one futuristic scenario, Mait said, soldiers faced with kicking down a door would instead send in a team of robotic scouts with specialized roles. "One may be a flier, two others crawlers, and yet another may have the ability to perch," providing perspective from above, he said.

To realize ideas like these, the military is offering millions of dollars in grants to the country's premier research labs. Two such grants were awarded this spring to engineers at Penn's robotics lab, known as GRASP (General Robotics, Automation, Sensing and Perception). Last spring, the same group won the biggest grant in the engineering department's history, $22 million, to make robotic versions of ants, flies, cockroaches, or some combination of insects capable of self-direction and cooperation.

In a recent demonstration at the GRASP lab, an octet of wheeled robots the size of paint cans circled and closed in on an L-shaped block, and then ferried it away in minutes, like ants with a picnic scrap. Each robot drinks in a stream of data on its neighbors' movements and then acts on that information on its own.

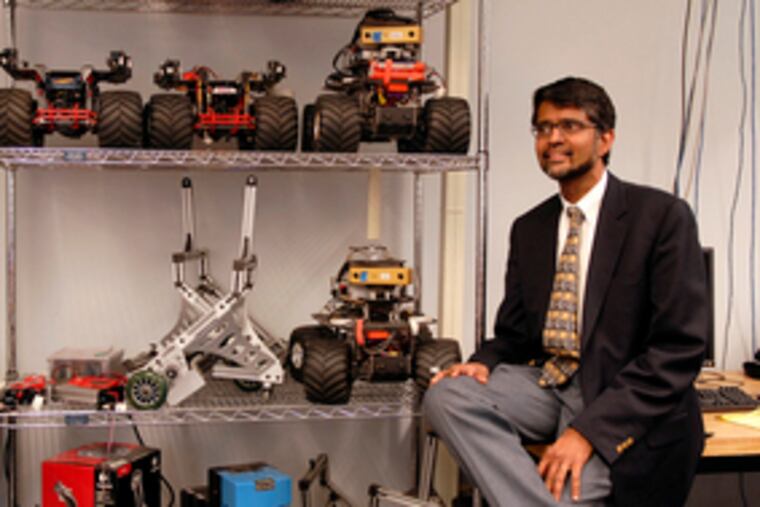

"We're following nature," said Penn engineering professor Vijay Kumar. In his office, he reached for a copy of National Geographic showing a colony of black ants crawling over one another to form a bridge with their bodies.

"Each ant is pretty stupid," he said. But something in their communication allows them to kill prey and move objects many times their size.

One key feature of ant colonies is that they are decentralized, he said. "Ants and bees don't have to be controlled."

Another insect trait researchers are trying to copy is the replaceability of worker bees: losing one or two won't hold back the swarm.

Some of the smartest-acting swarms have no leader, Kumar said, and no specialized roles. Instead, they follow a few rules that add up to complicated behavior.
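The article does not say which rules the GRASP robots actually follow. As a purely illustrative sketch, assuming a simple textbook "rendezvous" rule rather than anything Penn uses, the Python below gives each simulated robot a single local rule: move part of the way toward the average position of whatever neighbors it can sense. There is no leader and no assigned role, yet the group gathers at one point, and it still does so when a robot is lost. The sensing radius, step size and starting positions (SENSING_RADIUS, STEP_FRACTION and the robot coordinates) are hypothetical values chosen for the example.

```python
import math

# Hypothetical illustration only: a simple "rendezvous" rule, not the
# controller used by Penn's GRASP lab. Each robot sees only neighbors within
# SENSING_RADIUS and moves a fraction of the way toward their average position.

SENSING_RADIUS = 5.0  # assumed sensing range (arbitrary units)
STEP_FRACTION = 0.2   # assumed fraction of the gap closed each time step


def neighbors(i, positions, radius=SENSING_RADIUS):
    """Positions of the other robots within `radius` of robot i."""
    xi, yi = positions[i]
    return [
        (x, y)
        for j, (x, y) in enumerate(positions)
        if j != i and math.hypot(x - xi, y - yi) <= radius
    ]


def step(positions):
    """Move every robot once, using only what it can sense locally."""
    updated = []
    for i, (x, y) in enumerate(positions):
        near = neighbors(i, positions)
        if not near:
            updated.append((x, y))  # nobody in range: hold position
            continue
        cx = sum(p[0] for p in near) / len(near)
        cy = sum(p[1] for p in near) / len(near)
        updated.append((x + STEP_FRACTION * (cx - x), y + STEP_FRACTION * (cy - y)))
    return updated


def spread(positions):
    """Largest distance between any two robots: 0 means fully gathered."""
    return max(
        math.hypot(a[0] - b[0], a[1] - b[1]) for a in positions for b in positions
    )


if __name__ == "__main__":
    # Eight robots on the edge of a 3-by-3 square (hypothetical start).
    robots = [(0, 0), (3, 0), (0, 3), (3, 3), (1.5, 0), (0, 1.5), (3, 1.5), (1.5, 3)]
    for _ in range(50):
        robots = step(robots)
    print("spread with 8 robots:", spread(robots))

    # Lose one robot at the start: the remaining seven still gather.
    survivors = [(3, 0), (0, 3), (3, 3), (1.5, 0), (0, 1.5), (3, 1.5), (1.5, 3)]
    for _ in range(50):
        survivors = step(survivors)
    print("spread with 7 robots:", spread(survivors))
```

When every robot starts within range of every other, each update leaves the group's average position unchanged and shrinks every robot's distance from that average by the same factor, which is why the swarm gathers without anyone directing it. Real swarm controllers must also cope with limited range, collisions and noisy sensing, which this sketch ignores.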

The researchers aren't limiting themselves to ants, said Penn engineer George Pappas. They also are looking at wolves, dolphins and humans to see how different robots might cooperate.

In one wing of the lab, graduate students Nathan Michael and Jonathan Fink were testing rolling robots the size of toy trucks.

The hard part is figuring out what simple rules the robots should follow, Michael said. "We measure everything that's happening at an excruciating level of detail."

Scientists are already used to thinking of swarm behavior as potentially intelligent.

"Intelligence is not the sole province of human beings," said robot engineer Ron Arkin of Georgia Tech, who is collaborating with Penn professors. A desert ant, he said, is smart at living in the desert.

In another imitation of nature, one company is developing robots that fuel up like animals. The idea is to make them extract energy from foliage, said Robert Finkelstein, president of Robotic Technology Inc. in Potomac, Md.

Arkin said the demand for robots' independence comes partly from the increasing speed of warfare. In World War II, he said, you saw a radar blip and had time to call the commander. "Now you have just minutes to make a decision."

In another part of Penn's robotics lab, a robot about the size of a Chihuahua negotiated a stretch of treacherous rubble. "That's Little Dog," said Kostas Daniilidis, an engineering professor at Penn.

The spindly-legged, headless device looked more insectlike than canine. Off to the side were some of Little Dog's other challenges - a stretch of fake cobblestones and something like a bumpy wooden bridge.

Daniilidis and the other Penn engineers say they envision lifesaving applications for their work. "Imagine you have something like ground zero," Daniilidis said. Robots faster than humans could sift through debris looking for survivors.

But could someone program robots to kill people?

"It's a very difficult question," Daniilidis said. "Engineers should be involved in the discussion because they can define where the autonomy boundary is."

John Pike, a weapons analyst and director of GlobalSecurity.org, said the engineers were being naive about the long-term use of their work.

"No one's really thought through where this might be heading, and you're certainly not going to get those nice engineering-school people to talk about it. . . . They only make nice robots."

Look at who pays for their research, he said, referring to military agencies. "The profession of arms is about killing the enemy." Most flesh-and-blood soldiers, he said, may be reluctant to kill. According to studies, he said, "two-thirds of the people who sign up for the military aren't capable of killing." They either fail to fire or simply spray bullets wildly.

Robots would have no such reservations, he said. "They will be stone-cold killers and they will be infinitely brave."

"The bad news is we'd be a rogue superpower going around blowing everyone up," he said. "On the other hand, we could end genocide" without having to sacrifice thousands of American lives.

Finkelstein of Robotic Technology foresees robots replacing people in many commercial applications. He envisions robots driving cars far more safely than humans do.

And other countries will develop fighting robots. "Then you're into a technological arms race," he said.

Georgia Tech's Arkin has been writing and speaking on what he calls robo-ethics. The most important consideration, he said, is to hold onto today's ethical principles - "what humanity has deemed ethical behavior."

That means the use of appropriate weapons - "not nuking people back to the Stone Age - and it means no unnecessary suffering." Technically, he said, robots could be programmed with certain constraints.

"I'm happy to assist our war fighters with the best technology we can deliver them," he said. "But I want to make sure we're not selling our souls in the process."