Cities are using data to make decisions more than ever. Are they calculating risks for bias?

As governments increasingly use data to operate more efficiently, researchers and analysts caution that computer-based decision-making isn’t perfect. It can lead to discrimination.

With one million properties and limited staff, New York City needs to be selective about the buildings it inspects for fire risk. So it relies heavily on data — including on past fires and building traits — to choose.

Chicago uses data to identify which children are most at risk of lead poisoning and how officials could intervene.

And in Philadelphia, Mayor Kenney’s administration has emphasized evidence-based decision-making. Last year, the city launched GovLabPHL, a multi-agency collaboration that uses studies of human behavior to shape how the city interacts with residents.

Municipal records are now digitized and easier to share among departments, so Philadelphia and other cities are centralizing data that agencies historically kept to themselves.

But as governments increasingly use that data to operate more efficiently, researchers and analysts caution that computer-based decision-making isn’t perfect and must be used with discretion.

In 2016, a ProPublica investigation explained how software used across the country to predict future criminal behavior was biased against black people. In a data analysis of a Florida county, the software falsely labeled black defendants as likely to re-offend at nearly twice the rate it did for white defendants. The same year, the Seattle Times reported on a flaw in LinkedIn’s search feature that suggested male names when users searched for female names.
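The measure behind the "nearly twice the rate" finding is a false positive rate compared across groups: of the people who did not go on to re-offend, what share had been labeled high risk? The Python sketch below shows that arithmetic. It is not ProPublica's analysis or the software's method. The group labels "A" and "B", the function name `false_positive_rates`, and every record are made up for illustration.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return the false positive rate for each group.

    records: iterable of (group, predicted_high_risk, reoffended) tuples.
    A false positive is a person labeled high risk who did not re-offend.
    """
    false_positives = defaultdict(int)
    did_not_reoffend = defaultdict(int)
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            did_not_reoffend[group] += 1
            if predicted_high_risk:
                false_positives[group] += 1
    # Rate = wrongly flagged people / all people who did not re-offend
    return {g: false_positives[g] / did_not_reoffend[g] for g in did_not_reoffend}


# Hypothetical toy records, invented for this example only.
records = (
    [("A", True, False)] * 4     # group A: flagged, did not re-offend
    + [("A", False, False)] * 6  # group A: not flagged, did not re-offend
    + [("A", True, True)] * 5    # group A: flagged, re-offended
    + [("B", True, False)] * 2   # group B: flagged, did not re-offend
    + [("B", False, False)] * 8  # group B: not flagged, did not re-offend
    + [("B", True, True)] * 5    # group B: flagged, re-offended
)

rates = false_positive_rates(records)
ratio = rates["A"] / rates["B"]
print(rates, ratio)
```

In this invented data, the tool flags the same number of eventual re-offenders in each group, yet group A's members who did not re-offend are wrongly flagged twice as often as group B's. An audit that looked only at overall accuracy could miss that gap.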

“Data science is here to stay. It holds tremendous promise to improve things,” said Julia Stoyanovich, an assistant professor at New York University and former assistant professor in ethical data management at Drexel University. But policymakers, she said, need to use it responsibly.

“The first thing we need to teach people is to be skeptical about technology,” she said.

Data-review boards, toolkits, and software that cities, universities, and data analysts are starting to develop are steps in the right direction to spur policymakers to think critically about data, researchers said.

Philadelphia is setting standards for its departments' data collection and quality. Mark Wheeler, Philadelphia’s chief information officer in the Office of Innovation and Technology, said hearing Stoyanovich speak at a seminar this spring was “eye-opening” and helped inform the city’s current job listing for its next chief data officer.

How do municipal governments use data to make decisions?

Governments have long been using data and algorithms — series of instructions a human can give to a computing program, also called automated decision systems — mostly for “counting” tasks, such as processing payroll and tracking 311 calls. But in recent years, governments have started to use data and algorithms to deliver services, said Rayid Ghani, director of the Center for Data Science and Public Policy at the University of Chicago.

New York uses taxi trip data to decide how to regulate the fleet. Mapping data tell first responders the fastest ways to get to an emergency.

Philadelphia’s litter index maps trash hot spots to spur cleanups.

Philadelphia police analyzed data to launch a youth “diversion” program, in which students are often placed in after-school programs instead of jails for certain low-level crimes. In the first year, student arrests dropped by half.

During the administration of Charles H. Ramsey, Police Commissioner Richard Ross’ predecessor, the department realized it could use data for more than crime analysis and statistics when it tested the effect of foot patrols and saw a drop in crime, said Kevin Thomas, the Police Department’s director of research and analysis.

The department considered buying predictive algorithm software and tested a program that suggested where to put patrol vehicles, but officials didn’t see results, Thomas said. Such tools have not added value beyond what the department’s analytical teams can provide, he said.

“It’s not that I believe there is no place for” artificial intelligence, Thomas said. “What we’ve examined so far has been underwhelming in terms of applications toward crime incidents.”

How can data use go wrong?

“I think there is a lot of goodwill on the side of city government to try their best to use data responsibly,” Stoyanovich said. “But these problems are very challenging.”

For example, data collected for one purpose may throw off results if used for a different purpose. And despite conventional wisdom, having more data doesn’t necessarily mean better outcomes. Policymakers need data that is both high in quality and the right type for the question at hand.

While efficiency is often the goal for municipal officials pushing for data-based decision-making, Ghani said, “it sometimes leads to disproportionate impact for different types of people.”

Andrew Nicklin, director of data practices at the Center for Government Excellence at Johns Hopkins University, said the ProPublica story about racial discrimination in predictive crime software spurred him to work with other researchers on a data toolkit to help government officials avoid unintended consequences.

According to the toolkit’s authors, “algorithms can harbor biases against disadvantaged groups or reinforce structural discrimination.”

“We started from the assumption that all people are biased, and therefore all data are biased, and therefore all algorithms are biased,” Nicklin said. “Algorithms are created by people. People have fallibility. That’s OK. We just have to acknowledge that. We just have to have a conversation about it.”

How are governments and researchers working to cut down on discriminatory outcomes?

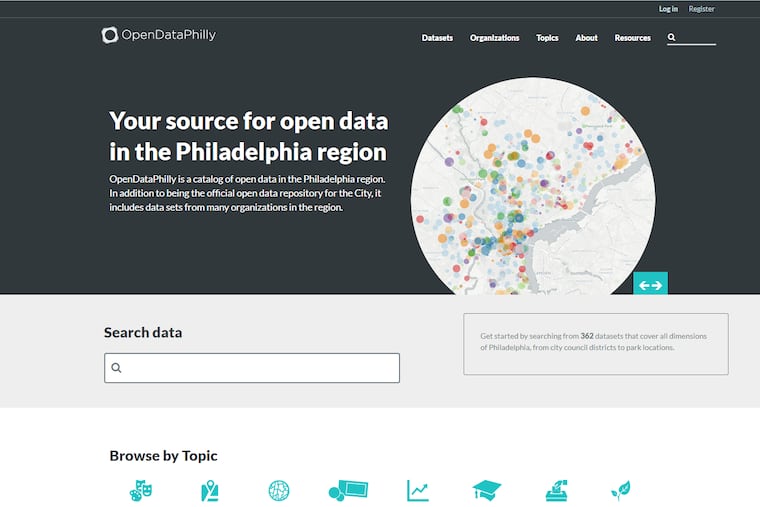

Cities such as Philadelphia, New York, and Cambridge, Mass., are turning to transparency as one solution. The cities' open data websites invite the public to examine information and cities' methods of interpreting it.

In May, New York convened a task force to study how the city uses data. By next December, its 18 members will submit recommendations on how the city should assess its automated decision-making “to ensure equity and opportunity,” according to the mayor’s office.

Research centers such as the AI Now Institute at New York University study social implications of artificial intelligence.

University researchers and data scientists are writing or updating codes of conduct, including “Community Principles on Ethical Data Practices.” One principle states: “Acknowledge and mitigate unfair bias” by sharing data processing methods and disclosing bias in algorithms.

In Cambridge, city staff and residents came together three years ago to form the Open Data Review Board, which recommends policies to govern datasets.

“As we start using more and more algorithms, we’re going to need oversight committees like the Open Data Review Board to be able to have the 35,000-foot view over this emerging field and understand how the pieces are working together,” said Josh Wolff, the city’s open-data program director.

In September, Nicklin, the Johns Hopkins researcher, along with researchers in San Francisco, Data Community DC, and Harvard University’s Data-Smart City Solutions, released an “Ethics and Algorithms Toolkit.” It walks people through questions to consider to minimize ethical risks. Ghani and his fellow researchers at the University of Chicago this year released software cities can use to audit their data to look for biases.

What are some questions policymakers should ask about data and its use?

Officials should consider how and for what purpose data was collected and whether that aligns with how they are using the data, researchers said.

Some other questions they suggest policymakers ask: How can we evaluate the accuracy and equity of our data systems? How can we reduce opportunities for bias in the data? Are knowledgeable teams in place to work with the data?

“It’s overwhelming for us in computer science as well,” said Stoyanovich, a member of New York’s Automated Decision Systems Task Force, “because there’s not just one way to do things correctly.”